NSF Funds Expedition into Software for Efficient Computing in the Age of Nanoscale Devices

August 19, 2010 @ 3:00 pm - 4:00 pm PDT

Adapted from NSF Press Release:

UC Irvine, along with five other universities, has received a $10 million National Science Foundation (NSF) Expeditions in Computing grant to develop robust software for computing with unreliable, but energy-efficient nanoscale computer components. The Irvine team is led by Chancellor’s Professor Nikil Dutt and Professor Alex Nicolau, computer scientists in the Donald Bren School of Information and Computer Sciences.

The two are also affiliated with the Center for Embedded Computer Systems (CECS) and the Irvine division of the California Institute for Telecommunications and Information Technology (Calit2). The NSF Expeditions in Computing program, established by NSF’s Directorate for Computer and Information Science and Engineering (CISE), rewards far-reaching agendas that “promise significant advances in the computing frontier and great benefit to society.” The grants represent some of the largest single investments currently made by the directorate and the NSF.

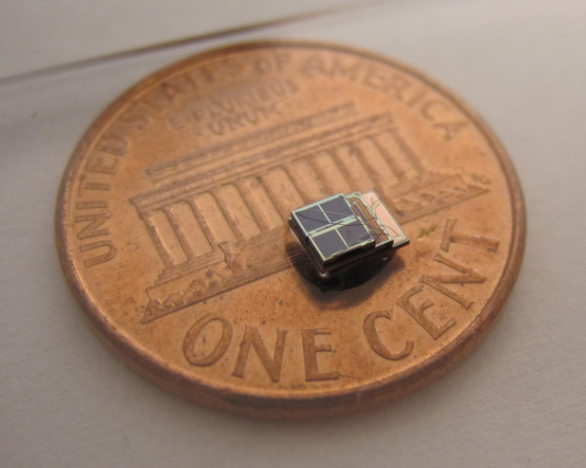

Millimeter Chip: Sensor-processing chips such as the smallest solar-powered sensor system, developed in the lab of Variability co-PI Dennis Sylvester at the University of Michigan, exemplify the trend toward millimeter-scale devices with nanoscale parts that perform more variably than traditional computer components.

The five-year grant seeks approaches for developing software applications that are resilient to variability in underlying hardware that is becoming increasingly error-prone. As semiconductor manufacturers build ever smaller components, the resulting hardware platforms (including circuits and chips) become less reliable and more expensive to produce because of variability arising from manufacturing, aging-related wear-out, and changing operating environments. Efforts to save energy by operating systems at lower power margins further exacerbate variability by increasing hardware errors. Today’s mainstream computer and embedded systems do not address such variability; instead, they require hardware to satisfy unnecessarily stringent requirements, use more power than needed, or both. Even with such requirements, existing hardware still occasionally suffers machine crashes, which are at best a nuisance and at worst can have catastrophic consequences (e.g., a reboot of an on-board computer that may have been involved in a recent plane crash). A new generation of software can help remedy these ill effects of hardware variability, and the UCI group proposed to investigate such software through this Expedition.

Variability JPEGs: In the UCLA lab of Variability co-PI Puneet Gupta, JPEG compression of the same image done with variability-aware software (at right) produced a result of the same quality while using more than 40% less power.

The research team seeks to develop computing systems (integrated hardware-software packages) that can sense the nature and extent of variations in their hardware circuits and expose these variations to software (compilers, operating systems, and applications), so that the software can adapt to overcome the variability present. “A major goal of this project is to break down the rigid interfaces between software and hardware, so that software can proactively address variability in the underlying hardware,” said Dutt. “The ability of software to detect, monitor, adapt and predict variations in the underlying hardware will be critical to the success of this project, and our team at Irvine will investigate compile-time and run-time software strategies for overcoming hardware variations,” added Nicolau.

The multi-university center will be led by Professor Rajesh Gupta at the University of California, San Diego, and includes, besides UCI, the University of California, Los Angeles; the University of Illinois at Urbana-Champaign; the University of Michigan; and Stanford University. “The proposed research spans a number of areas of computing: software, hardware, and computer-aided design. Bren School participation recognizes the excellence of our faculty and our tradition of research collaborations across the wide spectrum of modern computing,” said Hal Stern, Ted and Janice Smith Family Foundation Dean of the Donald Bren School of Information and Computer Sciences.

First Responders Calit2: Spending 40% less energy on handheld platforms thanks to variability-aware software would be a real boon to medical first responders sharing situational awareness in the field (pictured here on the UC San Diego campus during a county-wide disaster drill at Calit2, home base for the Variability Expedition).

Variability-aware computing systems would benefit the entire spectrum of embedded, mobile, desktop, and server-class applications by dramatically reducing hardware design and test costs while enhancing performance and energy efficiency. Many in-demand applications, from search engines to medical imaging, would also benefit, but the project’s initial focus will be on wireless sensing, software radio, and mobile platforms of all kinds, with plans to transfer advances in these early areas to the marketplace. “The research will provide significant societal benefits, including advances in server-class computing to benefit science itself, and improved energy efficiency of buildings, smart grids and transportation, among other application domains,” added Dutt. “Energy efficiency has become, over the last few years, one of the most critical goals for many industries, especially computing. As such, the advances promised by this Expeditions project cannot be overstated. The success of this effort promises to revolutionize the computing industry, with implications that stretch far beyond computing itself,” said Sandy Irani, chair of the computer science department.

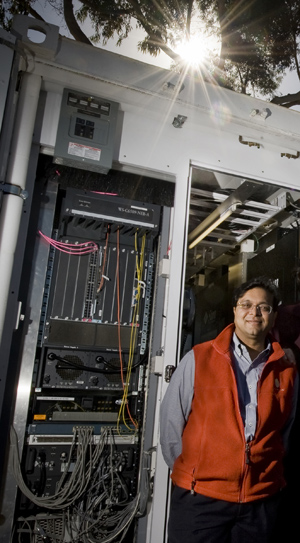

GreenLight Instrument: Variability Expedition principal investigator Rajesh Gupta in front of the GreenLight Instrument, a modular data center at UC San Diego equipped to gauge the energy efficiency of computing systems like the ones to be developed under the new Expeditions in Computing project.

To ensure that the project reflects real-world challenges in the computing industry, organizers have recruited a high-powered Technical Advisory Board that initially includes top industry executives from HP, ARM, IBM and Intel. “The Center for Embedded Computer Systems (CECS), as the oldest such center in the nation, has a long history of working with key industrial players such as IBM, Intel, and HP in developing new technologies that significantly impact and are directly applicable in the high-tech industry. The Variability Expedition project follows in this tradition and is likely to significantly improve the ability of industries to develop energy-efficient and highly resilient software and hardware platforms for a diverse range of applications,” said Dan Gajski, CECS director.

More details about the project are available at http://www.variability.org.